Augmented Human Communication

Research Staff

Professor

Satoshi NAKAMURA

Associate Professor

Katsuhito SUDOH

Assistant Professor

Hiroki TANAKA

Assistant Professor

Seitaro SHINAGAWA

Affiliate Associate Professor

Sakriani Sakti

Affiliate Associate Professor

Keiji YASUDA

Go Beyond the Communication Barrier

The AHC Laboratory pursues research to solve problems in human communication based on speech and language, paralanguage, and non-verbal information. By applying artificial intelligence technologies, including deep learning, our lab tackles tasks that could not previously be solved. We also seek knowledge about human cognitive functions, as well as new information obtained through brain measurement, and use both in our research. We focus not only on theoretical aspects but also on the applicability of the technology, and we aim to build and validate prototype systems. Our research areas are listed below.

Research Areas

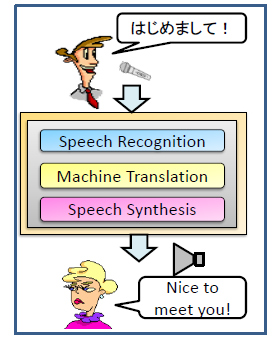

Simultaneous speech translation

Our research focuses on simultaneous speech translation, its evaluation, technologies supporting interpreters, and the use of multimodal information. (Fig. 1)

Natural language processing

Our research focuses on language processing problems for both spoken and written language, such as machine translation, text generation, error correction, and representation learning.

Multi-lingual statistical speech processing

Speech recognition and synthesis are fundamental technologies for realizing natural human-computer interaction. We study statistical methodologies such as hidden Markov models, Gaussian mixture models, deep neural networks, and recurrent neural networks. We are extending these models to emotional, spontaneous conversational, and multilingual speech.

Goal-oriented and chatbot-type dialog systems

We focus on new statistical dialogue models for natural dialogue that use verbal information, intonation, emotion, facial expressions, and gestures. These dialogue models are realized with machine learning techniques such as multi-modal unification and interactive transformation with deep learning.

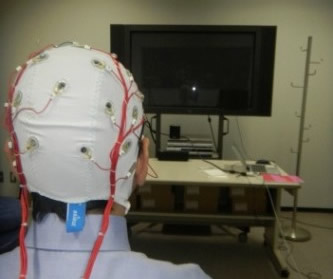

Brain analysis for verbal and non-verbal communication

Our research on cognitive communication analyzes brain activity measured with electroencephalography (EEG) to detect communication difficulties in real time. We also conduct research on supporting people with communication difficulties associated with conditions such as autism and dementia. (Fig. 2)