Multimodal Environment Recognition Laboratory (RIKEN)

Research Staff

Professor

Yasutomo KAWANISHI

Assistant Professor

Motoharu SONOGASHIRA

Research Areas

We research and develop signal processing and pattern recognition systems that use data from a robot's various sensors to recognize the people and objects around it in detail. In particular, we work on 3D understanding of the surrounding environment, recognition and tracking of objects in the environment, detailed understanding of the people around the robot, and multimodal sensor fusion.

3D Scene Understanding

Autonomous robots in the real world must correctly recognize their surrounding environment in 3D. We are researching 3D world reconstruction and 3D object detection/tracking based on RGB-D sensor data.

Open-set Recognition for Unknown Objects

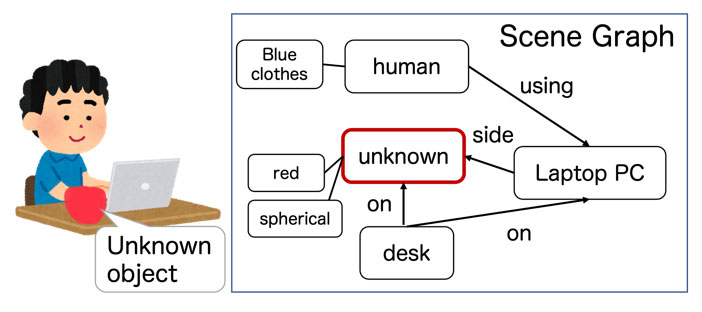

When we humans see an unfamiliar object, we can still perceive it as an object and describe its relationship to other things, such as "on the desk" or "beside the chair". A robot, on the other hand, can only detect objects that its object detector has been trained to detect, and it cannot estimate their relationships to other objects. Therefore, we are researching methods to detect unknown objects (open-set recognition) and to recognize them together with their relationships to surrounding objects (Fig. 1).

Fig. 1 Open-set scene graph generation with unknown objects

Human Understanding

For a robot to assist a person, it needs to recognize various kinds of information, such as who the person is, what the person is doing, and what state the person is in. It must also go beyond understanding the current situation and predict what the person will do in the near future. We are researching methods for estimating a person's state and predicting their behavior from human skeletal data.

Integration of Multiple Modalities

Humans observe the environment using all five senses, not just one modality. We therefore study not only image sensors but also combinations of multiple modalities for various recognition applications, such as human emotion recognition.

Key Features

The Multimodal Environment Recognition Laboratory is a collaborative laboratory with the Multimodal Data Recognition Research team in the RIKEN Guardian Robot Project. Our laboratory focuses on environmental understanding in situations where robots and humans coexist, using data obtained from cameras and other sensors. Each student is assigned a research theme in this field according to their interests and is supervised by faculty members. Through discussions in individual meetings and participation in team meetings at RIKEN, students learn to identify the key points of their research and explain them logically. Students also develop writing and presentation skills by submitting papers to international conferences.